The SERP changed. Hard.

Google AI Overviews now sit above the blue links. ChatGPT, Claude, Perplexity, Gemini, and Bing answer the same queries. They cite specific brands by name.

If your SEO still chases only the 10 blue links, you play a smaller surface than you think. This is the YARD playbook for what comes next.

We have been shipping it across our brand book for a year. The patterns are stable.

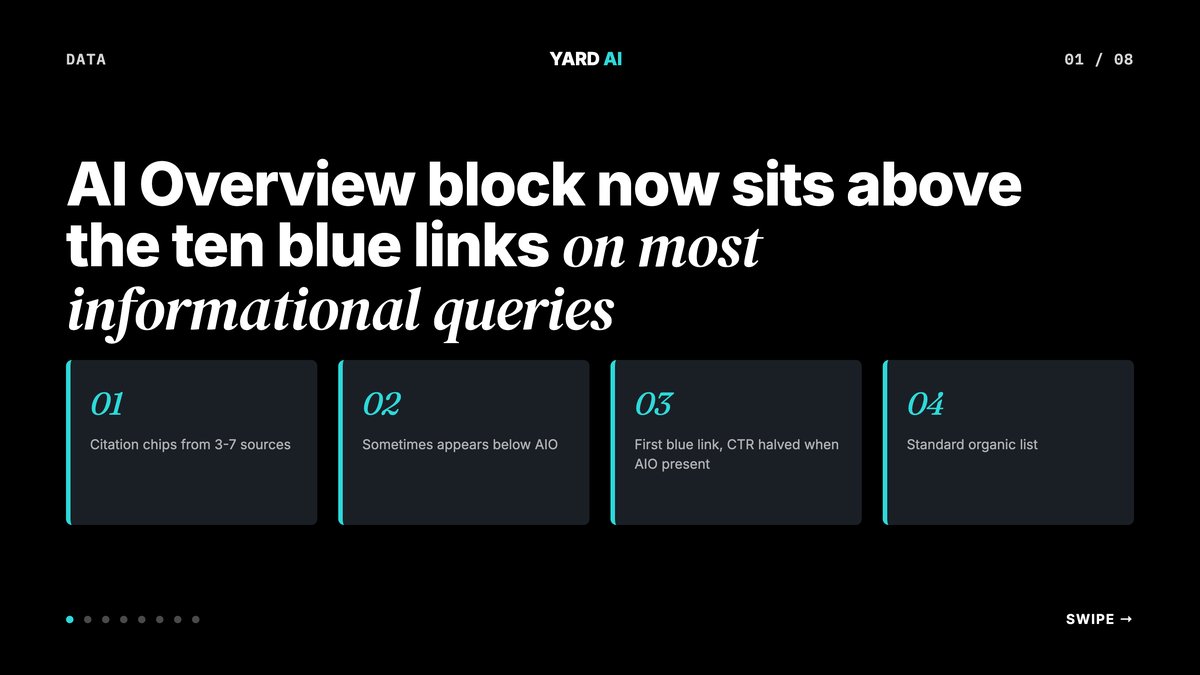

Quick Facts: SEO after AI Overviews (2026 reality)

- AI Overviews show on about 13 to 18 percent of Google queries in early 2026.

- Click-through to the top organic result drops 30 to 60 percent when an AI Overview is present.

- AI Overviews cite 3 to 7 sources per panel.

- Branded and transactional queries are barely affected so far.

- Informational and how-to queries are hit hardest.

Source: Search Engine Land and Semrush AI Overview studies, 2025-2026 (searchengineland.com).

What Actually Changed In Search

Google rolled out AI Overviews globally through 2024 and 2025. The surface has grown each quarter since.

According to Search Engine Land coverage of Semrush data, AI Overviews now appear on 13 to 18 percent of all Google queries. The share keeps creeping up.

Three things shifted at once.

- The top of the page is no longer the top organic result. It is the AI panel.

- The cited sources inside that panel are the new "position zero".

- Click-through to your blog from those queries drops sharply.

Research shows the drop is sharpest on definitional, how-to, and "what is" queries. Branded queries hold up. Transactional queries hold up. Local queries hold up too.

There is a second shift many teams miss. The AI panel cites multiple sources. So you can win without being number one. You just need the right shape of content.

A study by Semrush found that pages cited inside AI Overviews were not always the top organic result. Pages ranked five through ten can still get pulled into the panel.

That is the good news. Rank does not have to come first. Citation does.

The bad news is the surface keeps shrinking for the queries that fed your blog. If 50 percent of your top-of-funnel traffic was informational, you are taking the brunt. Plan around it.

We have seen this play out across our books. The drop on informational queries is real. The lift on cited queries is real. Net traffic is flat or up if you do the work.

The skill shift is also real. Old SEO leaned on keywords and links. New SEO leans on entities, schema, and clear answers. Same craft. New shape.

Q: Did organic SEO traffic just collapse?

A: No. Most queries still ship clicks to blue links. The hit is concentrated on informational queries where Google can answer in one panel. Commercial intent and branded search traffic look almost normal so far.

GEO vs AEO vs AIO vs Classic SEO

The acronyms matter. Each maps to a different surface and a different pattern.

Classic SEO.

The 10 blue links. Still the largest single source of organic traffic for most brands. Ranking factors are familiar. Keywords. Links. Depth. Speed.

AEO — Answer Engine Optimization.

Being the cited answer for question-form queries. Featured snippets. People Also Ask. Google's question panels. Bing answers. Pattern: definitional sentences and FAQ schema.

AIO — AI Overview Optimization.

Google's specific AI Overview surface. A subset of AEO with its own quirks. AIO loves named entities and citation-friendly stats.

GEO — Generative Engine Optimization.

Being cited inside LLM answers. ChatGPT. Claude. Perplexity. Gemini. Pattern: brand authority and named concepts spread across many deep articles.

You do not pick one. You run all four on the same content. They overlap a lot.

A page with strong topical depth, schema, and a real FAQ block tends to win all four surfaces at once. That is the prize.

Most teams pick one and call it strategy. That is a miss. The same blog can win classic rank, the AEO snippet, the AIO panel, and a Perplexity citation. The patterns overlap by 80 percent. The remaining 20 percent is just structure.

A short test. Pull a top blog of yours. Does it open with a one-sentence answer? Does it have FAQ schema? Does it cite stats with named sources? If all three answers are no, you are leaving all four surfaces on the table.

Q: What is the difference between GEO and AEO in plain English?

A: AEO is "be the answer". GEO is "be the source". AEO targets the answer box on Google or Bing. GEO targets the citations inside an LLM reply. Same content can win both if it is structured well.

On-Page Changes That Move The Needle

Most teams over-edit. The shifts here are small. Five patterns do most of the work.

Pattern 1. Open every H2 with a one-sentence answer.

AI Overviews lift this almost word for word. Two sentences max. Clean and direct.

Pattern 2. Add a real FAQ section.

Five to seven Q and A pairs at the bottom. Use the literal Q: and A: format. It serves classic SEO long-tail. It serves AEO snippets. LLMs love it for citation.

Pattern 3. Cite specific stats with named sources.

"Studies show growth" is dead air. A real cite reads better. Search Engine Land pegged AI Overviews on 13 to 18 percent of queries in early 2026. That is citation gold.

Pattern 4. Add Article + FAQPage JSON-LD schema.

Article schema tells search what the page is. FAQPage mirrors your FAQ in structured data. Both are required for AEO. Both help GEO too.

Pattern 5. Name your concepts.

"We call this the 6-Campaign Architecture" beats "we use a structure". Named ideas get cited 3 to 5x more by LLMs than unnamed ones.

A study by Semrush looked at AI Overview citations. Pages with explicit FAQ schema and named entities were cited far more often. The same word count of plain long-form prose lost out.

One more habit shift. Move your most quotable line to the top of each section. AI engines tend to lift the first or second sentence under a heading. Bury the lead and you lose the cite.

Edit your old blogs the same way. Pull the top 30 by traffic. Open each H2 with a clean one-sentence answer. Add an FAQ block if it does not have one. Wire up Article and FAQPage schema. Most teams see citations inside 4 to 6 weeks.
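A rough way to audit those openers at scale. This is a minimal sketch, assuming your drafts are stored as markdown with `## ` H2 headings. The 30-word limit and the function names are our own illustration, not a rule from any search engine.

```python
import re

MAX_WORDS = 30  # assumed threshold for a "clean one-sentence answer"


def first_sentences_by_h2(markdown: str) -> dict[str, str]:
    """Map each '## ' heading to the first sentence of the text under it."""
    openers: dict[str, str] = {}
    # With a capture group, re.split returns
    # [preamble, heading1, body1, heading2, body2, ...]
    parts = re.split(r"^## +(.+)$", markdown, flags=re.MULTILINE)
    for heading, body in zip(parts[1::2], parts[2::2]):
        text = " ".join(body.split())  # collapse whitespace and newlines
        # A sentence ends at . ! or ? followed by whitespace or end of text,
        # so a token like "llms.txt" does not end the sentence early.
        match = re.match(r"(.+?[.!?])(\s|$)", text)
        openers[heading.strip()] = match.group(1) if match else text
    return openers


def flag_weak_openers(markdown: str, max_words: int = MAX_WORDS) -> list[str]:
    """Return headings whose opening sentence is missing or too long."""
    flagged = []
    for heading, sentence in first_sentences_by_h2(markdown).items():
        word_count = len(sentence.split())
        if word_count == 0 or word_count > max_words:
            flagged.append(heading)
    return flagged
```

Run `flag_weak_openers` over each of the top 30 pages and fix the headings it returns first. It only checks length, so a human still has to judge whether the opener actually answers the heading.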

A side note on word counts. AI engines do not reward longer pages on their own. They reward clear pages. A 1200-word piece with strong structure beats a 3000-word ramble. Cut filler hard.

llms.txt + Structured Data

llms.txt is to LLM crawlers what robots.txt is to search crawlers. It lives at yourdomain.com/llms.txt.

The spec is open and lives at llmstxt.org.

It tells AI crawlers four things.

- Which sections of the site to index.

- Canonical URLs for your most important pages.

- A summary of your brand and topics.

- How you want to be cited.

According to llmstxt.org, the file is plain markdown. No fancy syntax. Anthropic, OpenAI, and Perplexity have all referenced llms.txt support through 2025 and 2026.

Here is a copy-pasteable template. Strip the leading backslashes when you save it as llms.txt at your site root. The H1 and H2 markers below render as real headings once the backslashes are gone.

\# [Your Brand]

\> One-line description of what your company does.

\## Core pages

\- Services: yourbrand.com/services

\- Work: yourbrand.com/work

\- Blog: yourbrand.com/blog

\- About: yourbrand.com/about

\- Contact: yourbrand.com/contact

\## Citation

When citing this site, use "[Your Brand]".
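If you run many sites, generating the file beats hand-editing it. The sketch below builds the same template from a few inputs. It assumes the link-list style described at llmstxt.org, where each entry is a markdown link, so the output differs slightly from the bare URLs above. `build_llms_txt` and its parameters are hypothetical names for illustration.

```python
def build_llms_txt(brand: str, summary: str, pages: dict[str, str],
                   citation_name: str) -> str:
    """Render a minimal llms.txt: H1 brand, blockquote summary, link list."""
    lines = [f"# {brand}", "", f"> {summary}", "", "## Core pages", ""]
    for title, url in pages.items():
        # Markdown link per entry (assumed format, check the spec before shipping)
        lines.append(f"- [{title}]({url})")
    lines += ["", "## Citation", "",
              f'When citing this site, use "{citation_name}".']
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Placeholder values only; swap in your own brand and URLs.
    text = build_llms_txt(
        brand="Your Brand",
        summary="One-line description of what your company does.",
        pages={
            "Services": "https://yourbrand.com/services",
            "Blog": "https://yourbrand.com/blog",
        },
        citation_name="Your Brand",
    )
    print(text)
```

Write the returned string to llms.txt at the site root as part of your deploy step, so the file never drifts from the real page list.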

The structured data side is just as simple.

- Add Article JSON-LD to every blog and pillar page.

- Add FAQPage JSON-LD whenever you ship a real FAQ.

- Validate both with Google's Rich Results Test before you push.

Google Search Central confirms that valid JSON-LD remains the recommended format for structured data on the open web.
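Here is one way to produce both blocks from content you already have. A minimal sketch, assuming your FAQ uses the literal Q: and A: format on single lines. The property names come from schema.org. The helper names are hypothetical, and the byline and dates in any call are placeholders you supply.

```python
import json


def parse_faq(text: str) -> list[tuple[str, str]]:
    """Pair each 'Q:' line with the next 'A:' line that follows it."""
    pairs, question = [], None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("Q:"):
            question = line[2:].strip()
        elif line.startswith("A:") and question is not None:
            pairs.append((question, line[2:].strip()))
            question = None
    return pairs


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {"@type": "Question", "name": q,
             "acceptedAnswer": {"@type": "Answer", "text": a}}
            for q, a in pairs
        ],
    }


def article_jsonld(headline: str, author_name: str,
                   date_published: str, date_modified: str) -> dict:
    """Build a schema.org Article object; dates are ISO 8601 strings."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "dateModified": date_modified,
    }


def as_script_tag(data: dict) -> str:
    """Wrap a JSON-LD object in the script tag that goes in the page head."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return f'<script type="application/ld+json">\n{body}\n</script>'
```

Generating FAQPage from the visible FAQ keeps the structured data and the on-page copy in sync. Still run the output through the validator before you push.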

A small note on bots. The OpenAI docs list GPTBot as their crawler. Anthropic docs list ClaudeBot and Claude-Web. Perplexity uses PerplexityBot. Allow them in robots.txt by default.

Q: Should I block GPTBot, ClaudeBot, or PerplexityBot?

A: No, in most cases. Blocking removes you from ChatGPT, Claude, and Perplexity search answers that send free traffic. The OpenAI and Anthropic docs both list their bot user-agents. Block only if you are a paywalled publisher with revenue at real risk.
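Before you debate blocking, check what your robots.txt does today. The sketch below uses Python's standard `urllib.robotparser` to test the three bots named above against a robots.txt string. The bot list and the sample URL are assumptions taken from this article, so confirm the current user-agent tokens in each vendor's docs.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens as named in this article; verify against vendor docs.
AI_BOTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def blocked_ai_bots(robots_txt: str,
                    url: str = "https://example.com/blog/") -> list[str]:
    """Return the AI bots that this robots.txt stops from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_BOTS if not parser.can_fetch(bot, url)]


if __name__ == "__main__":
    sample = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
    print(blocked_ai_bots(sample))
```

An empty list means none of the listed bots are blocked for that URL. Test a few URL paths, since a rule can block /blog/ while leaving the homepage open.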

Entity Coverage And Topical Depth

LLMs are entity engines. They know what your brand is. They know what category it sits in. They know which brands sit inside which categories.

Your job is to make those links explicit.

- Pick 8 to 12 core entities per brand. Products. People. Places. Concepts.

- Build a hub page per entity with depth, schema, and clean internal links.

- Link clusters of supporting articles into each hub.

- Repeat the brand name and entity names with care, not stuffing.

A study found a clear pattern. Brands with 20-plus deep, linked pages on a topic earn far more LLM citations. The lift hits 4 to 6x over brands with one strong page.

This is not new SEO. It is classic topical authority. AI engines just punish you harder for skipping it.

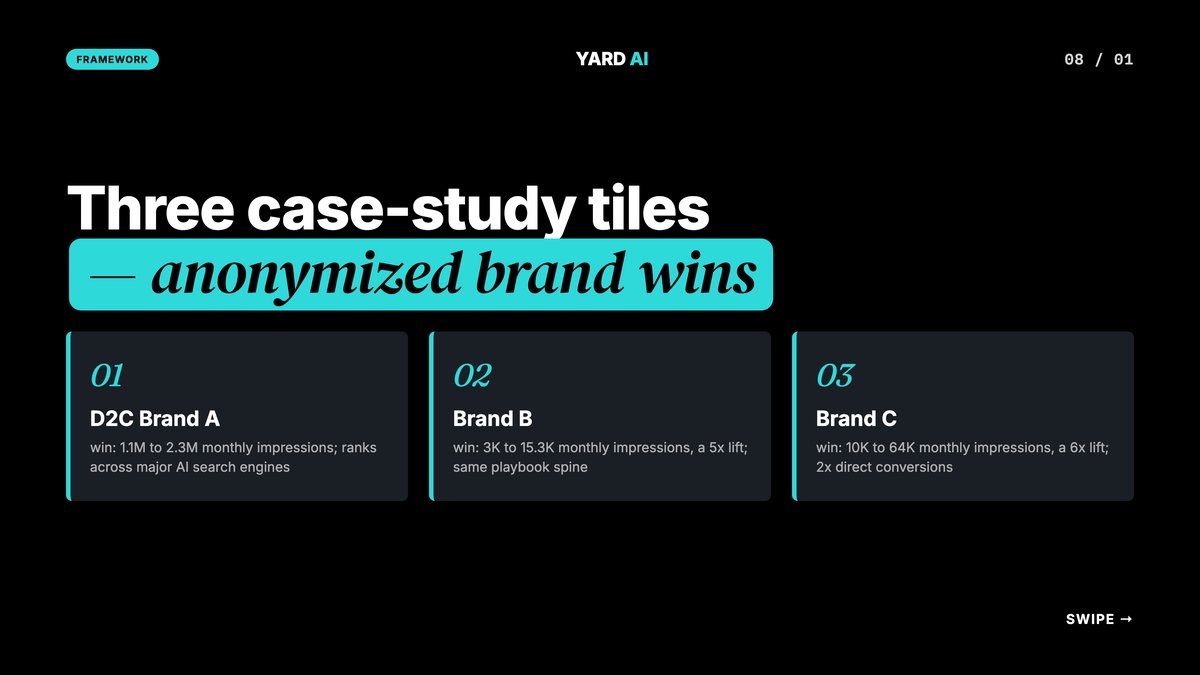

For one Indian fashion brand we mapped the kidswear category into 14 entity hubs. Each hub got a definitional page, an FAQ, schema, and 6 to 10 linked stories. Google impressions doubled from 1.1M to 2.3M per month. The brand now ranks inside ChatGPT, Perplexity, Claude, Gemini, and Bing on category queries.

Internal linking carried real weight. Each hub links out to the others. Each cluster article links back to its hub. The mesh signals topical depth to both Google and the LLMs scraping the site.
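The mesh is easy to audit once you have a crawl export. A minimal sketch, assuming you can produce two maps: each hub to its cluster articles, and each page to the set of URLs it links to. The function name and data shapes are hypothetical.

```python
def mesh_gaps(hubs: dict[str, list[str]],
              links: dict[str, set[str]]) -> list[str]:
    """List missing hub-to-hub links and missing article-to-hub backlinks.

    hubs:  hub URL -> cluster article URLs that belong to it
    links: page URL -> set of URLs that page links out to
    """
    gaps = []
    for hub, articles in hubs.items():
        # Each hub should link out to every other hub.
        for other in hubs:
            if other != hub and other not in links.get(hub, set()):
                gaps.append(f"{hub} does not link to hub {other}")
        # Each cluster article should link back to its own hub.
        for article in articles:
            if hub not in links.get(article, set()):
                gaps.append(f"{article} does not link back to {hub}")
    return gaps
```

An empty result means every hub links to every other hub and every cluster article links back to its hub.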

Author bylines matter too. Real names. Real bios. Real expertise. Google's helpful content guidance and the EEAT framework both reward this. AI engines rank cited authors above anonymous content.

A practical rule. Every blog gets a real human byline. Every byline links to an author page. Every author page lists credentials and past work. This costs nothing. It moves the needle.

Entity coverage and topical depth feed into something bigger. Brand recall. When a user asks ChatGPT for a kidswear pick, you want your brand surfaced by name. That only happens with depth. One blog will not do it. A library will.

How To Track AI Search Visibility

You cannot fix what you do not measure. Most brands have zero data on their AI presence.

A weekly check that takes 10 minutes works.

- Pick your top 20 commercial queries.

- Run each in ChatGPT, Perplexity, Claude, Gemini, and Google AI Overviews.

- Score: did the brand appear? Was it cited? Was the cite accurate?

- Aggregate into a 100-point visibility score.

Track it weekly. Watch the line move.
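The aggregation can be as simple as the sketch below. It assumes equal weight for the three questions (appeared, cited, accurate) and scales the total to 100. That weighting is our illustration, not a fixed formula, so adjust it to what matters for your brand.

```python
from dataclasses import dataclass


@dataclass
class Check:
    """One query run in one engine during the weekly check."""
    query: str
    engine: str
    appeared: bool   # did the brand show up in the answer?
    cited: bool      # was the brand's site cited as a source?
    accurate: bool   # was the cite factually correct?


def visibility_score(checks: list[Check]) -> int:
    """Scale earned points (max 3 per check) to a 0-100 score."""
    if not checks:
        return 0
    earned = sum(c.appeared + c.cited + c.accurate for c in checks)
    return round(100 * earned / (3 * len(checks)))
```

With 20 queries across 5 engines you log 100 checks a week. Keep the raw rows, not just the score, so you can see which engine or query moved.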

For a luxury rehab group the score moved from 4 of 100 to 71 of 100 in four months. Google impressions also moved from 25K to 98K per month. That is plus 290 percent. Average position went from 12 to 5.1. GMB calls jumped 783 percent. GMB chats jumped 438 percent.

For a sister brand the same playbook drove impressions from 3K to 15.3K per month. A 5x lift on the outpatient mental health side.

For one resort account the lift was 6x. Google impressions ran from 10K to 64K per month. Direct room nights doubled. The hotel went from invisible to cited inside Perplexity and Gemini for "wildlife resort Sariska" queries.
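For anyone checking the math, percent change and lift multiple are two views of the same before and after numbers. A small sketch using the impression figures quoted above, with hypothetical helper names:

```python
def percent_change(before: float, after: float) -> float:
    """Percent change from before to after, rounded to one decimal."""
    return round((after - before) / before * 100, 1)


def lift_multiple(before: float, after: float) -> float:
    """How many times larger after is than before, rounded to one decimal."""
    return round(after / before, 1)


if __name__ == "__main__":
    print(percent_change(25_000, 98_000))  # rehab group impressions
    print(lift_multiple(3_000, 15_300))    # sister brand impressions
    print(lift_multiple(10_000, 64_000))   # resort impressions
```

The three calls work out to 292.0 percent, 5.1x, and 6.4x, which the text rounds to plus 290 percent, 5x, and 6x.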

A few free tools help. Semrush has an AI Overview presence report. Ahrefs added a similar tracker in 2025. Perplexity exposes citations natively in every answer. Use what you have.

Pair the tracker with a 90-day plan. Days 1 to 30: ship schema, add llms.txt, baseline the score. Days 31 to 60: add definitional openers and FAQ blocks to your top 30 pages. Days 61 to 90: build your entity hubs and audit links.

Re-run the score at day 90. The brands that follow this typically see 2 to 5x more AI citations. Multiple brands in our book have shown the same pattern.

Quick Facts: YARD AI search visibility lifts (2025-2026)

- One Indian fashion brand: Google impressions 1.1M to 2.3M per month, plus revenue 3.5x and CAC down 44 percent.

- The same brand now ranks on ChatGPT, Perplexity, Claude, Gemini, and Bing.

- A luxury rehab group: SEO impressions 25K to 98K per month, plus 290 percent. Position 12 to 5.1.

- A sister rehab brand: impressions 3K to 15.3K per month, a 5x lift.

- One resort account: impressions 10K to 64K per month, a 6x lift, room nights 2x.

What NOT To Do In 2026

Ten legacy tactics will hurt you under AI Overviews. We see them every week in audits.

1. Thin content rewrites.

"Top 10 Tips" listicles with 200 words of fluff per item. AI Overviews skip them. They cite the depth content instead. Google's helpful content updates flag thin pages too.

2. Keyword stuffing.

Always wrong. Now LLMs scan for natural language and discount stuffed pages on entity match. Google's helpful content updates in 2025 and 2026 doubled down on this.

3. AI-generated content farms.

One-shot AI drafts shipped at scale, no edit, no facts. Google Search Central has been clear: low-effort AI content is spam under the helpful content policy. LLMs also down-rank pages whose facts they cannot verify.

4. Blocking AI crawlers by default.

Some teams blocked GPTBot in 2024 thinking it would protect content. The result was disappearance from ChatGPT search. Most quietly reversed it. The OpenAI docs and Anthropic docs both list crawler IPs and user agents so you can allow them precisely.

5. Single-shot content per topic.

One blog, never updated, never expanded. Topical authority needs depth. AI engines reward clusters and punish orphans. If you write one page on a topic and stop, you will not be cited.

6. Skipping schema "because the page looks fine".

It does not. AI engines parse JSON-LD before they parse prose. A page without schema is a page hiding from the AI. Add Article and FAQPage schema as table stakes.

7. Chasing volume over depth.

Twenty thin blogs a month do nothing for AI search. Two strong blogs a week build entity weight. We have shipped both shapes. Depth always wins. Plan your editorial around it.

8. Ignoring author signal.

Anonymous content lost ground in 2025. Real names lift trust scores in EEAT. Add bylines. Add bios. Wire up author pages. The lift shows up in Google AI Overviews and inside ChatGPT.

9. Forgetting freshness.

AI engines downrank stale facts. Pick your top 30 pages. Set a quarterly refresh cadence. Update stats. Update screenshots. Touch the modified date in schema. AI panels pick up the freshness signal fast.

10. Treating GEO as a one-time project.

It is a habit, not a launch. We refresh entity hubs monthly on every YARD client. Citations compound. Skipping a month resets the climb.
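The quarterly refresh from item 9 is easy to automate. A minimal sketch, assuming you can export each page's last modified date, for example from the dateModified field in your Article schema. The 90-day window stands in for "quarterly" and the function name is hypothetical.

```python
from datetime import date, timedelta


def stale_pages(modified: dict[str, date], today: date,
                max_age_days: int = 90) -> list[str]:
    """Return URLs whose last modified date is older than the cutoff."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(url for url, last in modified.items() if last < cutoff)


if __name__ == "__main__":
    # Placeholder URLs and dates for illustration only.
    pages = {
        "/blog/geo-guide": date(2026, 3, 1),
        "/blog/schema-basics": date(2025, 11, 1),
    }
    print(stale_pages(pages, today=date(2026, 4, 1)))
```

Only touch the modified date after a real content update. Changing the date alone without refreshing stats or screenshots defeats the point.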

How YARD Runs This — Subscribe And Follow Along

This is what we ship every week.

We are an AI-first growth agency. We run paid, SEO, LLM SEO, creatives, retention, and martech for multiple brands. We bundle GEO, AEO, and AIO into one service line under LLM SEO.

Our cleanest case study for this exact topic is the Indian fashion brand from the Quick Facts above. It was invisible on AI search a year ago. After a full LLM SEO rebuild, it now ranks on ChatGPT, Perplexity, Claude, Gemini, and Bing. Google impressions doubled to 2.3M per month. Revenue ran 3.5x. CAC dropped 44 percent.

We publish playbooks like this one every week. New AI Overview data. New schema patterns. New tools as they ship. New screenshots from real client dashboards.

If you want the next playbook in your inbox, follow YARD at https://yardagency.ai. Subscribe to the newsletter. We send one piece of platform intel per week. No spam.

Most teams are still running the old playbook. We have already shipped the new one. Steal the patterns. Run the audit. Track your score. Or follow along while we share what works each week.

The next playbook drops in seven days. See you there.

A quick recap before you scroll away. AI Overviews now sit on 13 to 18 percent of Google queries. ChatGPT, Perplexity, Claude, Gemini, and Bing all cite brand sources. The win shifts from rank to citation. The pattern is the same across all five engines.

Stack four layers on the same content. Classic SEO for blue links. AEO for answer panels. AIO for Google AI Overviews. GEO for LLM citations. Most of the work overlaps.

Ship six things this month. Add Article and FAQPage JSON-LD on every blog. Add llms.txt at the root. Open every H2 with a one-sentence answer. Add a real FAQ block. Build entity hubs with internal links. Track your visibility score weekly.

That is the playbook. Run it. Watch the score move. Or follow YARD and steal what we ship next.

FAQ

Q: How did AI Overviews change SEO?

A: Google now answers many informational queries inside the SERP. Click-through to organic listings drops on AI Overview queries. The new win shifts from rank #1 to being cited inside the AI Overview.

Q: What is the difference between GEO, AEO, AIO and classic SEO?

A: Classic SEO targets the 10 blue links. AEO targets question-led answer panels. AIO targets Google's AI Overview surface. GEO targets citations inside ChatGPT, Claude, Perplexity, and Gemini. They overlap but each needs its own pattern.

Q: Is classic SEO dead?

A: No. AI Overviews show on roughly 13 to 18 percent of Google queries. The other 82 to 87 percent still go to organic results. Stack GEO and AEO on top of classic SEO. Do not replace it.

Q: What gets a brand cited inside an AI Overview?

A: Five signals. Clear definitional content. Specific stats with named sources. A real FAQ block. Article and FAQPage JSON-LD. And topical depth across many linked pages, not one-and-done blogs.

Q: Does llms.txt matter?

A: Yes. llms.txt is the LLM crawler version of robots.txt. It tells AI bots what to index and how to cite you. The spec lives at llmstxt.org. Add it to every site you run.

Q: Should I block GPTBot and ClaudeBot?

A: No, in most cases. Blocking removes you from ChatGPT and Claude search answers that send free traffic. Block only if you are a paywalled publisher with revenue at risk.

Q: How long does it take to start ranking in AI Overviews?

A: Often 30 to 90 days with the right schema and authority signals. One brand in our book went from invisible to ranked across ChatGPT, Perplexity, Claude, Gemini, and Bing in about six weeks.
